Yes, web crawling is not just useful; it is the foundation of how the internet functions as a searchable system. Without web crawlers, search engines would not be able to discover websites, analyze content, or organize the vast amount of information available online.

Think of the internet as a massive global library with billions of books scattered across endless shelves. Web crawlers act like tireless librarians that constantly explore the library, catalog new books, update old entries, and record connections between different topics.

These automated programs continuously scan websites, follow links, and collect information that allows search engines to index pages and deliver relevant results to users.

In fact, according to Google Search Central, Google’s search index contains hundreds of billions of webpages, and most of them are discovered through crawling processes performed by Googlebot.

Beyond search engines, web crawling is widely used in industries such as market research, price monitoring, data aggregation, competitive intelligence, and brand monitoring.

So the real question is not whether web crawling is useful—it is how critical it has become for SEO, business intelligence, and digital innovation. Learn how web crawling fuels SEO strategies, boosts visibility, and even drives entire business models.

What Is Web Crawling?

Web crawling is an automated process in which software programs systematically browse websites to discover, analyze, and index web pages.

A web crawler starts with a list of known URLs and then follows hyperlinks on each page to discover new pages. This process allows search engines to continuously map the structure of the internet and keep their search indexes updated.

Common examples of web crawlers include:

- Googlebot (used by Google)

- Bingbot (used by Microsoft Bing)

- SEO crawler tools like Screaming Frog or Sitebulb

These crawlers analyze website content, structure, and links to understand how pages relate to each other.

Without web crawling, search engines would have no way to discover new websites or track updates to existing content.

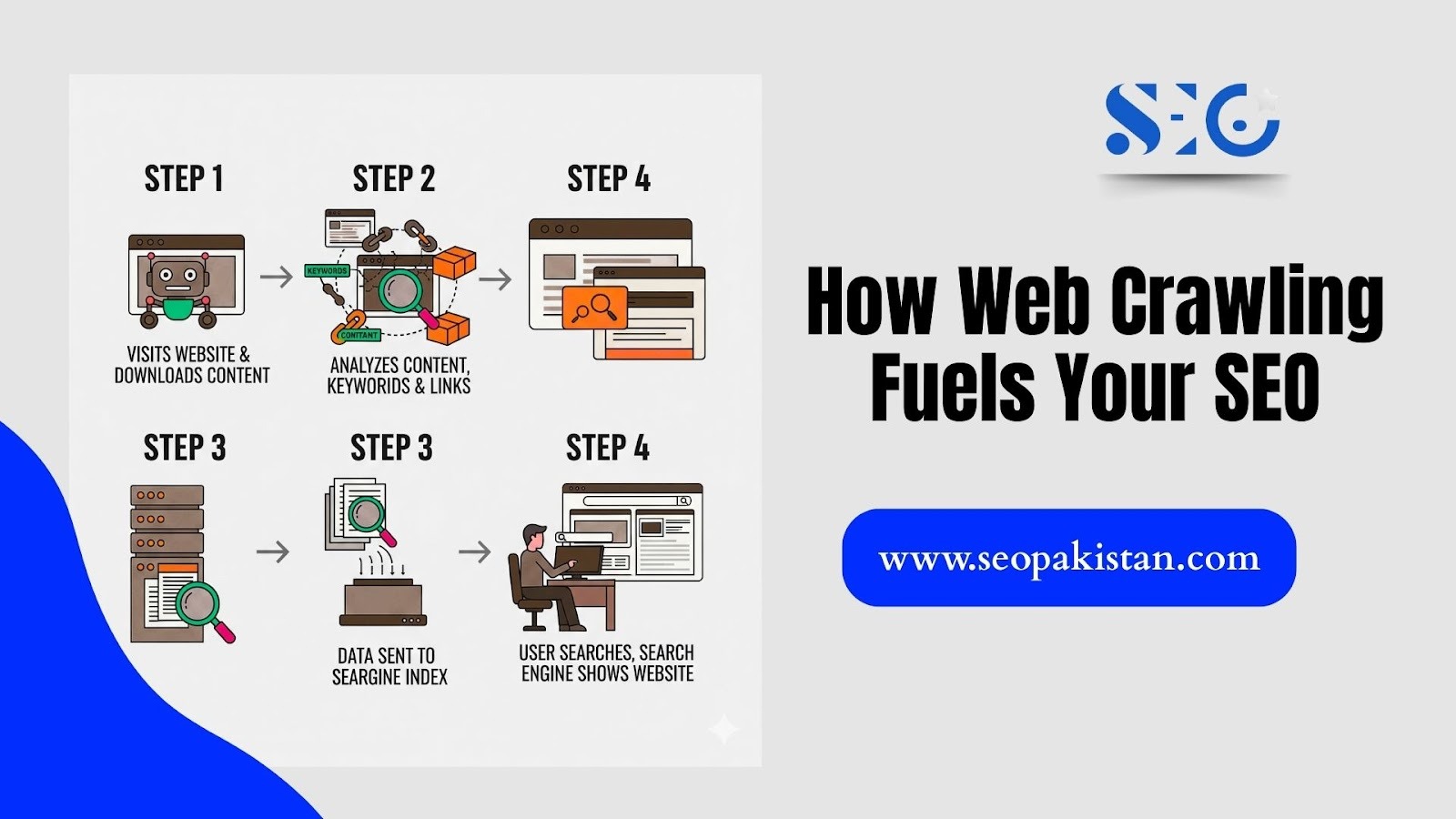

How Web Crawling Works

Web crawling follows a systematic process to explore the internet and find new content. This process is continuous, as crawlers constantly revisit pages to check for updates. The goal is to efficiently discover, download, and index web content so search engines can provide relevant results.

Step 1: Starting with Seed URLs

Every crawling process begins with a set of seed URLs. These are initial web addresses that serve as starting points for the crawler.

Seed URLs may come from:

- previously indexed pages

- XML sitemaps

- submitted URLs

- trusted websites

From these initial web addresses, crawlers branch out to explore other pages on the internet.

Step 2: Downloading and Analyzing Pages

Once a crawler accesses a webpage from the seed list or crawl queue, it begins to download the page’s content and resources. Think of it like a browser loading a website for you. This includes:

- HTML content

- Images

- CSS files

- JavaScript

The crawler then analyzes the page to understand:

- The main content

- Page structure

- Keywords and topics

- Internal and external links

This information helps search engines determine how relevant the page is to different search queries.

Step 3: Discovering New Links

Crawlers extract all hyperlinks found on a page and add them to a crawl queue. These links lead the crawler to additional pages, creating a continuous cycle of discovery across the internet.

This process allows crawlers to:

- Discover new websites

- Identify updated content

- map relationships between web pages

This cycle enables search engines to maintain a vast, ever-growing index of billions of pages. The more effectively a crawler can navigate your site, the more comprehensively it will be indexed.

The Primary Benefit: How Web Crawling Fuels Your SEO

For digital marketers and website owners, understanding “Is Web Crawling Useful” is key to unlocking search engine optimization success. Web crawling forms the foundation of online visibility, making it essential for anyone serious about improving their presence online.

Enabling Search Engine Indexing

Crawling is the first step in the search engine indexing process. If a search engine crawler cannot access your website, your pages cannot be indexed.

Without indexing, your website will not appear in search results, regardless of how good the content is. This makes website crawlability one of the most important technical SEO factors.

Analyzing Content and Signals

Modern crawlers perform sophisticated analysis that extends far beyond simple content collection:

- Content Relevance: Crawlers read and interpret your text, analyzing keywords, topics, and semantic relationships to understand what your pages are about

- Link Authority: They follow both internal and external links to assess your site’s authority and trustworthiness within your industry or niche

- Site Structure: Crawlers map your website’s architecture to understand page hierarchy and ensure important content remains easily accessible

The Speed Advantage

Websites optimized for efficient crawling enjoy faster indexation of new content. This speed advantage translates directly into quicker rankings for fresh pages and updates, giving you a competitive edge in rapidly evolving markets.

Crawl Budget Optimization

Search engines allocate a crawl budget, which determines how many pages of your website they will crawl during a specific period.

Large or poorly structured websites may waste crawl budget on:

- Duplicate pages

- Broken links

- Unnecessary parameters

- Redirect chains

- Low-quality content

Optimizing your website’s structure helps search engine crawlers focus on your most important pages. A clean, logical structure guides them away from less valuable content, making better use of your allocated crawl budget.

Evaluating Website Structure

Search engine crawlers also look at your site’s architecture to understand its layout and how different pages relate to each other. A well-organized site is easier for them to navigate, which can improve how your pages are indexed and ranked.

Crawlers analyze the architecture of your website, including:

- Internal linking

- Page hierarchy

- Navigation structure

A well-structured website is easier for crawlers to explore, which improves indexing and search visibility. This ensures that users can find your content more easily through search engines.

Key Business Uses of Web Crawling

Web crawling powers numerous business applications that extend far beyond search engine optimization. These diverse use cases demonstrate the technology’s versatility and commercial value.

| Use Case | Core Objective | Key Data Extracted |

| Competitive Analysis | Monitor competitor strategies | Pricing, product descriptions, and content topics |

| Market Research | Identify market trends & consumer demand | Product reviews, user feedback, and new feature releases |

| Data Aggregation | Build searchable databases | Job listings, flight schedules, real estate data |

| Brand Monitoring | Protect brand reputation | Mentions on forums, social media, news sites |

| Security Audits | Identify website vulnerabilities | Scripts, links, and forms that could be exploited |

For example:

- Travel websites crawl airline sites to compare ticket prices.

- Job platforms crawl company career pages to collect job listings.

- Price comparison tools track thousands of e-commerce websites.

So, is web crawling useful? These applications demonstrate how it powers entire digital business models.

A Crucial Distinction: Web Crawling vs. Web Scraping

Many people confuse web crawling with web scraping, but understanding the distinction is essential for ethical and legal data collection practices.

- Web Crawling focuses on exploration and discovery. The process is systematic and comprehensive, aimed at creating a complete map of web content. Ethical crawlers respect robots.txt files and follow polite crawling practices to avoid overwhelming servers.

- Web scraping targets specific data extraction. The process is surgical, designed to pull predetermined data points like product prices or contact information from specific pages. Scraping can be more aggressive and may violate terms of service if implemented without proper consideration.

Think of crawling as exploring a library to create a comprehensive catalogue system. Scraping, by contrast, involves going directly to specific books to copy particular paragraphs or data points for immediate use.

Best Practices for an Optimized Crawling Strategy

Successful crawling requires strategic planning and adherence to established protocols. These guidelines help keep your crawling strategies both efficient and ethical.

Respect the Rules

Always honor robots.txt directives and implement polite crawling practices. Respect rate limits, avoid overwhelming servers, and follow website terms of service. Ethical crawling builds sustainable data collection processes.

Ensure Accessibility

Design your website’s internal linking structure and navigation to be crawler-friendly. Create logical hierarchies, implement XML sitemaps, and ensure important pages remain within a few clicks of your homepage.

Use the Right Tools

Modern SEO platforms offer crawler simulation tools that help identify technical issues before they impact your search visibility. These tools can reveal broken links, orphaned pages, and crawlability problems.

Monitor and Analyze

Leverage Google Search Console to track crawl statistics, identify errors, and monitor indexation status. Regular analysis helps you optimize your site’s crawlability and resolve issues quickly.

Create an XML Sitemap

An XML sitemap provides a structured list of your website’s important pages. Submitting your sitemap through Google Search Console helps search engines find and index your content more efficiently.

Is Your Site Invisible to Google? Common Crawling Issues You Need to Fix

While web crawling is essential for search engine visibility, several technical hurdles can prevent search engines from effectively exploring and indexing your site. These obstacles can hinder your website’s performance in search results, making it harder for users to find your content.

Here are some common web crawling challenges and how they can impact your site:

- Blocked Pages: Instructions in your website’s robots.txt file can accidentally block crawlers from accessing important pages, making them invisible to search engines.

- Duplicate Content: When multiple pages have the same or very similar content, it confuses crawlers, diluting your ranking potential and splitting traffic between identical pages.

- Slow Website Speed: A slow-loading website can exhaust a crawler’s budget, meaning it may leave your site before it has indexed all your important content.

- Broken Internal Links: Dead-end links prevent crawlers from discovering other pages on your site, creating roadblocks in the crawling process and harming user experience.

- Poor Website Architecture: A confusing or disorganized site structure makes it difficult for crawlers to navigate and understand the relationship between your pages, which can lead to incomplete indexing.

By proactively identifying and addressing these common issues, you can enhance your site’s crawl efficiency. By addressing these issues, you’ll make it easier for search engines to index your pages accurately. This simple step answers the question “is web crawling useful?” with a clear yes, as it directly improves your overall search performance and visibility..

Conclusion

Web crawling represents far more than a technical process; it’s a fundamental digital asset that powers modern business intelligence and online visibility. From enabling search engines to helping businesses monitor competitors and market trends, crawling technology drives informed decision-making across industries.

Is web crawling useful? Definitely, dedicated digital marketers must understand and optimize for web crawling. It ensures your content gets discovered, keeps your competitive intelligence up-to-date, and helps your business stay ahead of market changes.

Utilizing the power of web crawling is a strategic decision that transforms raw digital information into actionable business insights and measurable results. Visit SEOPakistan.com to learn more and get started today!

Frequently Asked Questions (FAQs)

How to check for crawlers?

To determine if a web crawler is accessing your site, review your server logs. Google Search Console’s “Crawl Stats” report also provides detailed information on Googlebot’s activity on your site.

Do all websites allow crawling?

No. Websites can use a robots.txt file to block crawlers from accessing specific parts of their site, often to prevent sensitive or irrelevant content from being indexed.

Does crawling improve performance?

Yes. By running regular crawls on your own site, you can identify technical issues like broken links, duplicate content, or slow pages, which can then be fixed to improve both SEO and user experience.

Is crawling legal?

Web crawling is legal as long as it is done ethically. This means respecting robots.txt files, avoiding server overload, and not extracting private or copyrighted data.

Why is crawling important for SEO?

Crawling is the first step for SEO. If a search engine crawler cannot access your site, your pages won’t be indexed and cannot appear in search results, making them invisible to organic traffic.